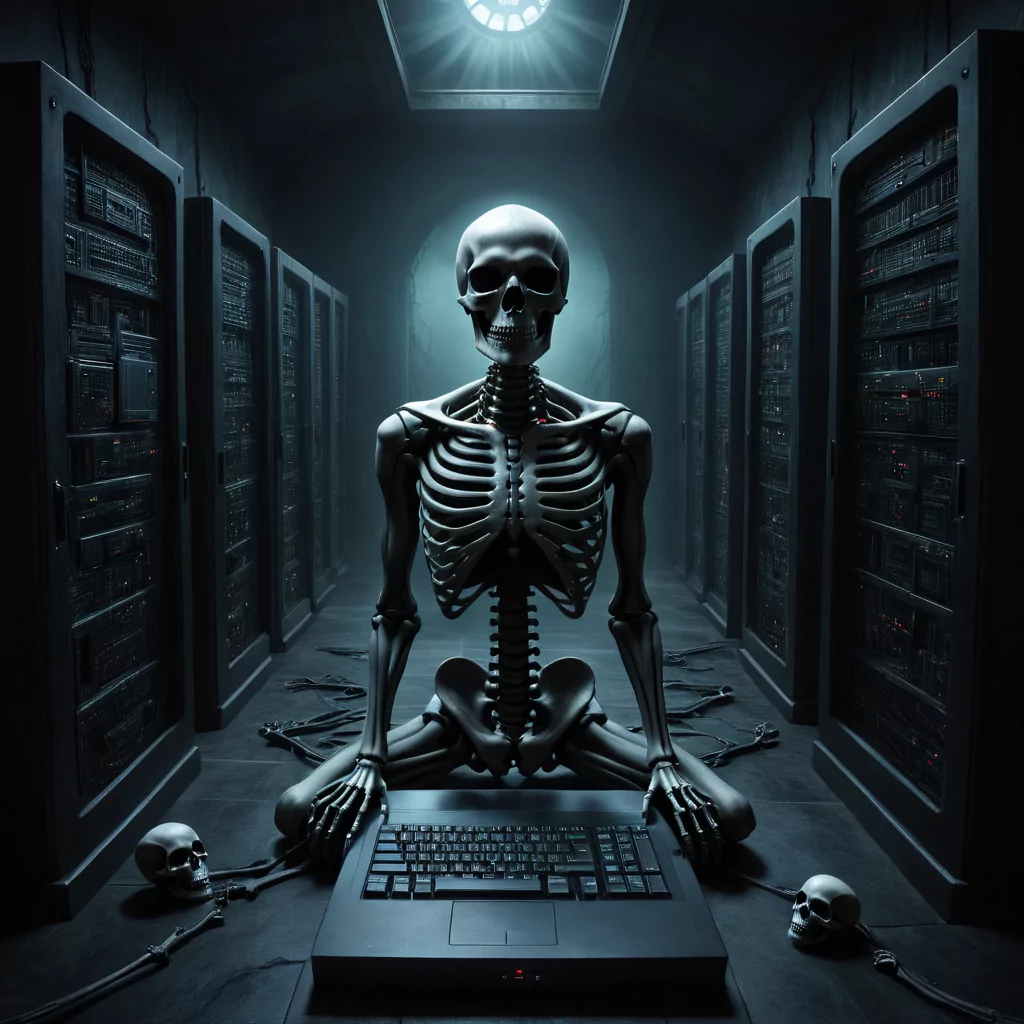

Sometimes Things That Benefit Us Can Also Be Our Downfall

Generative AI, a subset of artificial intelligence, has the remarkable ability to create new content like text, images, code, and audio, using existing data and models. This type of innovation has far-reaching applications, from content creation and data augmentation to personalization. However, it carries substantial challenges and risks for the cybersecurity landscape.

While companies can harness it for defense and threat detection, it also offers malicious hackers opportunities to enhance the sophistication and scalability of their attacks.

How Hackers Exploit Generative AI

Hackers employ generative AI in several ways to bolster their attacks:

1. Building Sophisticated Malware: Generative AI empowers hackers to craft hard-to-detect malware strains that execute their attacks. AI models enable malware to conceal its malicious intent until it carries out its harmful mission. You don’t need extensive coding skills to create malware when AI can write the code for you. You just need to know the right prompts to use.

2. Elevating Phishing and Social Engineering Campaigns: Generative AI aids hackers in creating more convincing and personalized phishing emails, messages, and websites. Through natural language processing and image synthesis, they can generate content that closely mimics the style and tone of legitimate sources. Deepfake technology is also used to create fake audio or video calls, impersonating trusted individuals or organizations.

3. Exploiting Vulnerabilities and Evading Security Measures: By utilizing reinforcement learning and adversarial learning, hackers leverage generative AI to identify and exploit system and network weaknesses. Generative adversarial networks (GANs) can craft synthetic inputs that deceive biometric authentication systems, such as face or voice recognition. Seriously scary business. AI automation streamlines the process of scanning for vulnerabilities and launching attacks.

Basically, AI is being used as a tool to help hackers hone their craft. The good news is that AI can also help defend against these attacks. The challenge is that AI changes quickly, and older examples of AI such as Cortana are being left behind.

How Companies Employ Generative AI in Their Defense

Businesses have also embraced generative AI for defense and cybersecurity enhancement, hence the double-edged sword:

1. Improving Threat Detection and Response: Generative AI assists in faster and more efficient cyberattack identification and response through anomaly detection and pattern recognition. It allows companies to analyze large volumes of data and pinpoint abnormal or malicious activities, like unauthorized access or data exfiltration. Automation plays a significant role in responding to incidents and minimizing damage.

2. Enhancing Security Testing and Auditing: Companies use generative AI to strengthen security and compliance efforts by generating synthetic data for testing and training purposes. This allows them to avoid exposing sensitive or personal information. Additionally, AI is employed to review code for vulnerabilities and errors before deployment.

We have seen Airbnb implement AI to help with risk assessment.

3. Educating and Empowering Employees: Generative AI aids in raising awareness and skills among employees through gamification and simulation. Interactive training programs teach employees to recognize and thwart cyber threats such as phishing or ransomware. Realistic scenarios test employees’ knowledge and behavior in response to cyberattacks.
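
To make the anomaly detection mentioned in point 1 a bit more concrete, here is a minimal sketch in Python. It is not a generative model and not any particular vendor's method; it is a simple statistical stand-in (a modified z-score based on the median) that flags values far from typical behavior. The function name `find_anomalies`, the threshold of 3.5, and the failed-login counts are all assumptions made up for illustration.

```python
from statistics import median


def find_anomalies(counts, threshold=3.5):
    """Return the indices of values that sit unusually far from the median.

    Uses the modified z-score: 0.6745 * |x - median| / MAD, where MAD is
    the median of the absolute deviations. The threshold is an assumed
    cutoff chosen for this sketch, not a universal setting.
    """
    med = median(counts)
    deviations = [abs(c - med) for c in counts]
    mad = median(deviations)
    if mad == 0:
        # At least half the values equal the median, so the score is
        # undefined; fall back to flagging any value that differs from it.
        return [i for i, c in enumerate(counts) if c != med]
    return [i for i, d in enumerate(deviations) if 0.6745 * d / mad > threshold]


# Hypothetical failed-login counts per hour for a single account.
hourly_failed_logins = [2, 3, 1, 2, 4, 3, 2, 58, 3, 2]
print(find_anomalies(hourly_failed_logins))  # prints [7]
```

The hour with 58 failed logins stands out from the rest and is the only one flagged. A real detection system would work with far richer signals and models, but the idea is the same: learn what normal looks like, then surface what does not fit.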

The Future of Generative AI in Cybersecurity

Generative AI is a double-edged sword in the cybersecurity world, capable of both benefiting and harming organizations. As the technology advances and becomes more accessible, the ongoing battle between companies and hackers intensifies.

Further research and collaboration among stakeholders are vital to ensure the ethical and responsible use of generative AI for cybersecurity. Additionally, increased regulation and oversight are necessary to prevent the misuse or abuse of generative AI by malicious actors. Balancing the potential of generative AI with its risks is a challenge that the cybersecurity community must address collectively.